Project “Human.Machine.Culture – Artificial Intelligence for the Digital Cultural Heritage”

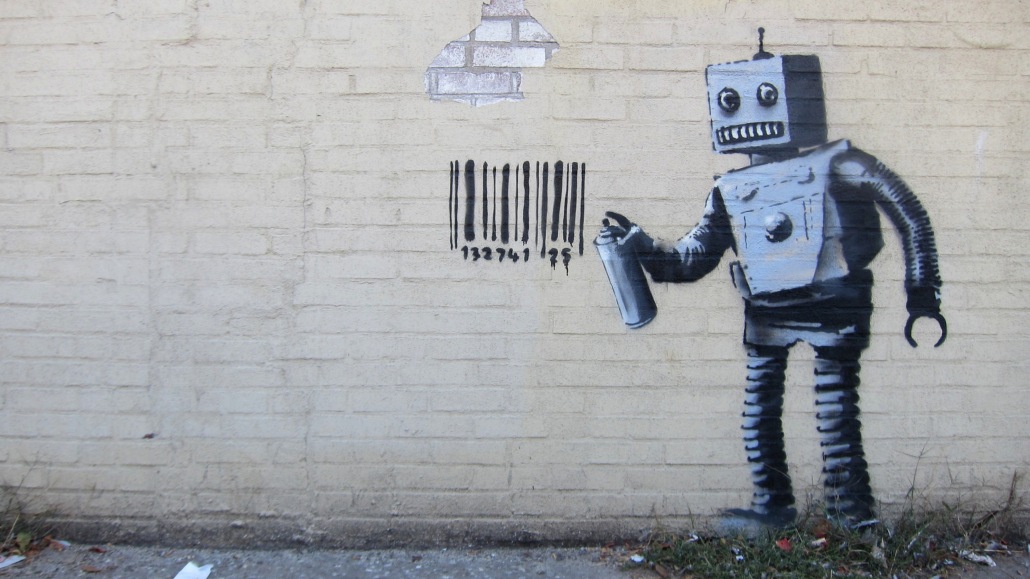

© Scott Lynch/Flickr – CC BY-SA 2.0

Basic project information and background

Cultural heritage institutions such as libraries, archives and museums have digitised an enormous number of objects over the last 25 years. Most of these digitised items are available only as scans, i.e. in an image format. To make this data usable for research as well as for the cultural and creative sectors, the contents of this digitised cultural heritage must be extracted from the images. For example, texts and their layout need to be recognised and made available in machine-readable formats so that a computer can work with this content. This is where the project “Human.Machine.Culture” comes in: it applies artificial intelligence (AI) methods to this task. The word “machine” here stands for machine learning, a subfield of artificial intelligence.

This research project is funded by the Federal Government Commissioner for Culture and the Media (BKM, project grant no. 2522DIG002) and carried out by the Berlin State Library (SBB). As one of Germany’s leading cultural heritage institutions, the SBB has not only large amounts of data (digitised books, library metadata and images) but also the necessary know-how in the field of artificial intelligence. In this project, intelligent document analysis methods are therefore being developed to extract full texts and structural data (such as tables of contents) from the digital collections, a non-trivial task for texts printed in blackletter typefaces such as Fraktur. Furthermore, illustrative elements are extracted from the images of scanned books, classified and indexed for similarity searches. The subject indexing of works – a classic library activity – will be supported by semi-automatic procedures. Finally, large data sets (text, images, metadata) will be compiled as offerings for research and AI applications and made publicly accessible. The project runs for three years (2022-2025), and the results will be published here on the project website on an ongoing basis.

Project objectives (sub-projects and work packages)

The project “Mensch.Maschine.Kultur – Künstliche Intelligenz für das digitale kulturelle Erbe” (Human.Machine.Culture – Artificial Intelligence for the Digital Cultural Heritage) consists of four sub-projects that pursue different objectives in coordination with each other and combine them with the appropriate AI procedures.

Sub-project 1 “Intelligent methods for generic document analysis” provides AI methods for document analysis with the aim of obtaining high-quality full texts and structural data from the various kinds of information contained in the digitised collections (text, image, layout). This work package therefore goes beyond text recognition: it also separates image elements and analyses the layout to enable the structured representation of texts such as those in newspapers and magazines.

Sub-project 2 “Image analysis tools for digital cultural heritage” extends the work on image similarity search begun in the predecessor project Qurator through the recognition, extraction and classification of digital image content.

Sub-project 3 “AI-supported content analysis and subject indexing” assists the experts in the specialist departments of the Berlin State Library with semi-automated procedures for subject indexing and systematically incorporates their expertise. Fully automated procedures for the recognition of entities such as persons, places and organisations will support searches of material from the digitised collections in the library’s discovery system.

Sub-project 4 “Data provision and curation for AI” bundles and documents data that have been specifically prepared for research and use in AI contexts, and makes these datasets publicly available for subsequent use. In addition, guidelines on how to identify and deal with qualitatively or ethically problematic holdings and content are developed in collaboration with the wider community.

A short project presentation can also be found on the website of the Berlin State Library.

All results from the Mensch.Maschine.Kultur project are made available as open source, open data and open access for free re-use. Find out more here.